n8n and Bright Data Challenge: Unstoppable Workflow

This is a submission for the AI Agents Challenge powered by n8n and Bright Data.

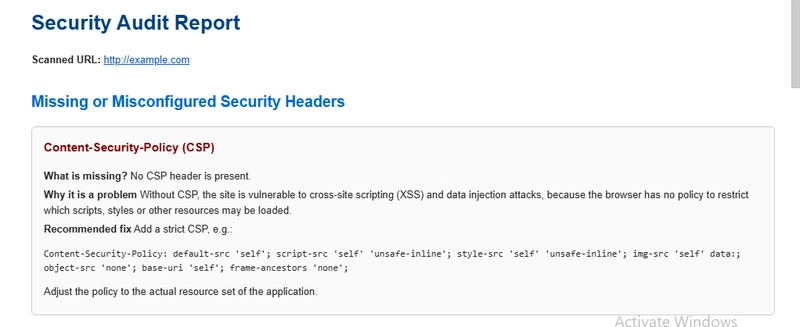

What I Built

Meet Bugsy — my AI-powered bug hunter. Bugsy works as an ethical agent that scans websites for common security exposures and gently waves a red flag when it finds something risky.

Think of it as a tireless security intern who never sleeps, never complains, and always documents what it finds.

The goal? To give developers and site owners an extra set of AI eyes to spot things like:

🔑 Leaky environment variables

🗝️ Accidentally exposed API keys

⚠️ Security misconfigurations

📂 Sensitive data left in publicly accessible files

This is not about attacking systems — it’s about raising awareness and improving security hygiene in a world where even the smallest mistake can snowball.

Demo

n8n Workflow

Technical Implementation

Bugsy was built inside n8n, designed as a careful and ethical agent:

System Instructions: The agent is explicitly instructed to act only as a security scanner, focusing on detection and reporting (never exploitation).

Model Choice: gpt-oss-20b — chosen for its balance of reasoning ability and lightweight contextual memory.

Memory: Short-term memory for scanning logic, with no sensitive data retention.

Tools & Nodes:

Bright Data Verified Node → safe, compliant web data collection

Custom Logic Nodes → analyze response patterns, detect risky strings (e.g., AWS_SECRET_KEY=…)

Markdown/HTML Report Generator → produce clean, developer-friendly summaries
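The detection and reporting steps above could look roughly like the plain-Python sketch below. It is not Bugsy's actual rule set: the rule names, regex patterns, the `redact` helper, and the report layout are all hypothetical. Since n8n's Code node also accepts Python, logic like this could be adapted to one, but the node-specific input/output wiring is left out. Redacting each match before it reaches the report is one way to keep the "no sensitive data retention" promise.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set for illustration; production scanners use larger, tuned lists.
RULES = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "Secret in env-style assignment": re.compile(
        r"\b(?:AWS_SECRET_KEY|AWS_SECRET_ACCESS_KEY|API_KEY|SECRET_KEY)"
        r"\s*[=:]\s*['\"]?([A-Za-z0-9/+_\-]{16,})"
    ),
    "Private key block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


@dataclass
class Finding:
    url: str
    rule: str
    line: int
    preview: str  # redacted value, so the report never holds the full secret


def redact(value: str, keep: int = 4) -> str:
    """Keep only a short prefix; a fixed mask avoids leaking the secret's length."""
    return value[:keep] + "****"


def scan(url: str, body: str) -> list[Finding]:
    """Run every rule over every line of a fetched response body."""
    findings = []
    for lineno, text in enumerate(body.splitlines(), start=1):
        for rule, pattern in RULES.items():
            for match in pattern.finditer(text):
                # Use the captured value if the rule has a group, else the whole match.
                value = match.group(match.lastindex or 0)
                findings.append(Finding(url, rule, lineno, redact(value)))
    return findings


def to_markdown(findings: list[Finding]) -> str:
    """Render findings as a Markdown table for a developer-facing summary."""
    if not findings:
        return "## Bugsy report\n\nNo findings."
    rows = ["| URL | Rule | Line | Preview |", "| --- | --- | --- | --- |"]
    rows += [f"| {f.url} | {f.rule} | {f.line} | `{f.preview}` |" for f in findings]
    return "## Bugsy report\n\n" + "\n".join(rows)
```

Emitting Markdown keeps the summary readable in an issue or chat message; a later step could convert it to HTML if needed.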

Bright Data Verified Node

This was the heart of the project. The Bright Data Verified Node acted like a seatbelt and airbag, ensuring Bugsy only interacted with publicly accessible data and never crossed ethical or legal lines.
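The sketch below makes no claim about how the Bright Data node enforces that boundary. It shows a hypothetical, complementary scope guard that could run before each fetch: an allowlist of hosts whose owners agreed to be scanned (`ALLOWED_HOSTS` and the `Bugsy` user agent are assumptions) plus a robots.txt check using Python's standard `urllib.robotparser`. The robots.txt lines are passed in so the function is easy to test; in practice `RobotFileParser.set_url()` followed by `read()` would fetch them.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Hypothetical allowlist: only hosts whose owners opted in to scanning.
ALLOWED_HOSTS = {"example.com"}


def is_in_scope(url: str, robots_lines: list[str], user_agent: str = "Bugsy") -> bool:
    """Return True only if the host is allowlisted and robots.txt permits the path."""
    host = urlparse(url).hostname or ""
    if host not in ALLOWED_HOSTS:
        return False  # never touch sites nobody asked us to check
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse already-fetched robots.txt content
    return parser.can_fetch(user_agent, url)
```

robots.txt is advisory rather than an access-control mechanism, so a check like this complements an explicit opt-in list instead of replacing it.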

Journey

I started with a simple idea: what if an AI agent could act like a friendly security sidekick?

The biggest challenge was balancing power with responsibility. A scanner can easily become too aggressive or invasive, but Bugsy needed to be effective and ethical.

Highlights along the way:

Writing strict guardrails in the system prompt so Bugsy never attempts exploitation.

Leveraging Bright Data’s verified infrastructure for compliance and peace of mind.

The thrill (and mild panic 😅) when Bugsy actually discovered a live API key — which we responsibly reported to the site owner.

What I learned

Security is often about small cracks in the wall that are easy to overlook. Bugsy shows that AI can help catch those cracks before someone malicious does.

Impact

For Developers → A safety net to catch mistakes before code goes live.

For Companies → Reduced risk of data leaks and misconfigurations.

For the Community → Promotes ethical AI use in cybersecurity.

Bugsy doesn’t replace professional security audits, but it provides a first line of defense — a friendly assistant to raise early red flags.

Challenges Faced

Ensuring scans remained ethical and non-invasive.

Balancing false positives vs. false negatives — tuning detection patterns so Bugsy isn’t too noisy.

Integrating n8n logic flows with AI reasoning while keeping scans fast.
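One common way to make pattern matching less noisy, not necessarily the one Bugsy uses, is to score each regex hit by its Shannon entropy, H = -Σ p·log2(p) over the character frequencies, and to drop obvious placeholders. Open-source scanners such as detect-secrets use entropy heuristics of this kind. The minimum length of 16, the 3.5-bit threshold, and the placeholder list below are illustrative assumptions to tune against real data.

```python
import math
from collections import Counter

# Hypothetical placeholder values often seen in docs and sample configs.
PLACEHOLDERS = {"changeme", "your_api_key_here", "xxxxxxxxxxxxxxxx"}


def shannon_entropy(s: str) -> float:
    """Shannon entropy of the string's character distribution, in bits per character."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())


def looks_like_real_secret(candidate: str, min_len: int = 16, min_entropy: float = 3.5) -> bool:
    """Heuristic filter applied after a regex match; both thresholds are assumptions to tune."""
    lowered = candidate.lower()
    if len(candidate) < min_len:
        return False  # too short to be a typical API key
    if lowered in PLACEHOLDERS or "example" in lowered:
        return False  # documentation placeholder, not a leak
    return shannon_entropy(candidate) >= min_entropy  # random-looking strings score high
```

Raising `min_entropy` trades false positives for false negatives. Hex strings carry at most 4 bits per character and base64 at most 6, so scanners often use a separate threshold per character set.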

What’s Next

Bugsy is just the start 🚀. Planned next steps:

Add scheduled scans with email/Slack notifications.

Expand detection rules to cover CORS misconfigurations, directory listings, and metadata leaks.

Build a developer dashboard where reports can be stored, tracked, and prioritized.

Experiment with multi-agent collaboration (one agent scans, another verifies).
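Detection rules for two of the planned checks might look like the sketch below. It assumes the scanner sends its request with an `Origin` header set to a probe value it controls (`PROBE_ORIGIN` is hypothetical) and then inspects the response. A server that reflects an untrusted origin, or `null`, while also sending `Access-Control-Allow-Credentials: true` lets other sites read authenticated responses; a bare `*` is often fine for public assets, so it is flagged for review only. Directory listings are spotted by the `Index of /` page title that Apache's and nginx's autoindex pages use by default.

```python
import re

PROBE_ORIGIN = "https://bugsy-probe.invalid"  # hypothetical origin sent with the request
DIR_LISTING = re.compile(r"<title>\s*Index of /", re.IGNORECASE)


def check_response(headers: dict[str, str], body: str) -> list[str]:
    """Flag risky CORS headers and autoindex pages in one HTTP response."""
    # HTTP header names are case-insensitive, so normalize them first.
    h = {k.lower(): v.strip() for k, v in headers.items()}
    acao = h.get("access-control-allow-origin")
    creds = h.get("access-control-allow-credentials", "").lower() == "true"
    findings = []
    if acao in (PROBE_ORIGIN, "null") and creds:
        findings.append("CORS: untrusted origin allowed with credentials")
    elif acao == "*":
        findings.append("CORS: wildcard origin (review if the endpoint is not public)")
    if DIR_LISTING.search(body):
        findings.append("Directory listing enabled")
    return findings
```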

Closing Thought

Bugsy embodies the idea that AI can be a force for good in security. Instead of exploiting weaknesses, it shines a light on them — giving developers the chance to fix issues before they cause harm.