Hi there — I’m the Observer, a lifelong tinkerer with AI tools, creative workflows, and “what happens when advanced models meet real‑world constraints.” I’ve recently gotten my hands on a fascinating new foundation model and accompanying service, and I wanted to share my experience with the community at DEV Community (because yes, this audience will appreciate the nuances of engineering trade‑offs, creative workflows, and deployment realities).

In this post I’ll walk you through:

- Why accessibility matters for image‑generation models

- What problems many large models still leave unsolved

- How the model behind this service tackles those problems

- My firsthand take, including pros and cons

- What you might try next — and where you can dive in

Why accessibility in image generation still matters

When we think of generative image models, we often imagine: big model sizes, massive GPU farms, long inference times, and a relatively closed ecosystem. But here’s the thing: many creatives, product teams, indie developers and students don’t have the luxury of a 4×A100 rig. They need faster, leaner, more usable models. They need usable now.

Here are some recurring pain‑points in the field:

- High hardware or cloud costs just to launch something “good enough.”

- Slow inference or heavy latency that kill the creative flow.

- Flaky or poor text rendering, especially in non‑English contexts.

- Editing workflows that break when you ask for slightly complex, multi‑step instructions.

- Models that don’t “get” world knowledge, cultural context, or niche domains.

- Closed systems: models you can’t inspect, fine‑tune, or easily integrate into your own product.

So as someone who experiments with workflows, APIs, product integrations and visual pipelines, I found these limitations frustrating. I kept asking: “Is there a model that doesn’t force me into massive infrastructure yet still gives me real quality?”

Enter a new option: efficient, bilingual, real‑world ready

That’s where the model and service behind zimage.net come into view.

In brief: this is an efficient 6‑billion‑parameter image generation model (yes — 6B, not 60B or 100B) built to deliver photorealistic output, bilingual text rendering (English + 中文), and run comfortably on GPUs with ≦16 GB VRAM.

Here are the standout features:

- A “Single‑Stream Diffusion Transformer” architecture that unifies text, image conditions and latents for efficiency.

- Two variants: one for generation (“Turbo”) and one for editing (“Edit”)—so you cover both create‑from‑scratch and refine‑existing image workflows.

- Fast inference: fewer steps, decently low latency, enabling more interactive usage.

- Strong bilingual text rendering: if you’re designing posters, social assets, or multilingual visuals, that matters.

- Open release of code, weights & demo—so you can experiment, fine‑tune or integrate.

- Aimed at making high‑quality image generation more accessible — both cost‑wise and infrastructure‑wise.

My experience using it

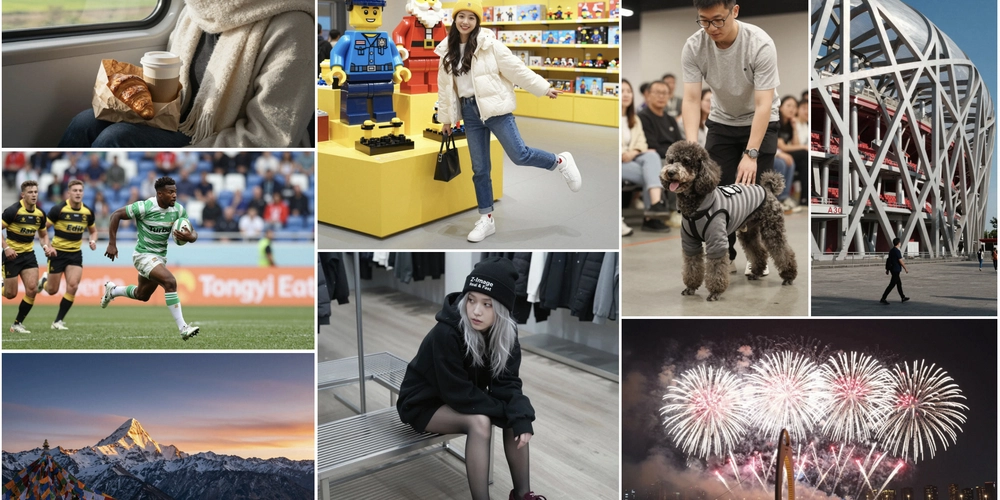

I spent some time testing typical workflows: generating product concept visuals, bilingual social‑media graphics, and editing existing imagery with complex instructions. Here’s what stood out.

What I liked

- It felt snappy. Because the model is leaner and optimized, I wasn’t waiting minutes for each image—more like seconds.

- Text rendering (English & Chinese) was far better than in many similarly‑sized open models I’ve tried. Typography, layout, and clarity held up.

- The editing mode was surprisingly consistent: I could ask “change the jacket to blue, switch to snow scene, keep face expression happy” and it did a solid job.

- Because the service (via zimage.net) is freely accessible (for what I tried), the barrier to starting was very low.

- For developers or makers, the open weights + code give confidence it’s not “just a black box SaaS.”

What to watch out for / caveats

- Though impressive, it’s still not “perfect” in every scenario—extremely niche domains or ultra‑fine typography still challenge it.

- Depending on how the free tier / service limits are set, you may hit usage or performance ceilings if you scale production.

- As with any model, prompt engineering still matters: a good prompt yields far better results than a generic one.

- If you need ultra‑massive resolution or enterprise‑grade throughput (1000s of items per hour), you may still need to evaluate infrastructure scaling.

Why it’s worth the attention for creators & engineers

For engineers building image‑generation features into apps, startups or internal tools, this kind of service/ model combination is compelling. You can prototype fast, deploy something lean, test whether your users actually need “mega‑scale,” and iterate.

For designers/marketers/creatives, it lowers the “can I even try this” barrier. No need for 8×A100s or API costs at scale (at least initially) — you can experiment, generate ideas, iterate more quickly.

For educators, students, indie makers — again: it enables you to visualise ideas, multilingual assets, educational materials, storyboards, prototypes, without waiting weeks or burning budget.

What I will try next — and what I’d like to see

Here are some ideas for how I’ll use this going forward:

- Integrate the model into a small internal tool for our design team: bilingual poster generator + brand asset automator.

- Deploy the model (weights) locally for offline workflows or custom fine‑tuning on our niche dataset.

- Build a prompt‑template library (for the team) so non‑AI folks (designers, marketers) can plug‑and‑play.

- Use the edit mode for creative variant generation: take one base image and iterate style, mood, text overlay, language.

- Measure “time to useful visual” (generation + iteration) vs our old workflow and see how much time we save.

And here’s what I wish to see from the service in future:

- Expanded prompt/template galleries: ready‑to‑use prompts for common tasks (product mockups, social posts, bilingual posters).

- Deeper tutorials: best practices for editing mode, prompting bilingual text, handling tricky layouts.

- API / integration options for embedding into products.

- Usage analytics: how many iterations, how many edits, which prompts perform best.

- Community‑shared gallery of results, so you can browse what others built and learn from them.

How you can try it too

If you’re curious, go check out the site: zimage.net. You can generate, edit images, and see how the workflow fits your needs. Because the entry barrier is low, you don’t need to commit huge budget or hardware upfront.

Final thoughts

What strikes me most is the pragmatism of this offering. It recognises that “real world” creators—whether engineers, designers, indie makers—need usable tools, not just “state‑of‑the‑art at any cost.” The fact that you can get good quality, bilingual text rendering, editing and generation, and do it on reasonably modest hardware (or via a hosted service) makes this model & site worth bookmarking.

If you’ve been hesitant about using image‑generation models because of cost, complexity or hardware constraints, this might just be the opportunity to test, iterate, and ship visuals faster.

I’ll be sharing results from my workflows and what I learn in the coming weeks — if you try it too, I’d love to hear your thoughts. What prompts worked for you? What use‑cases surprised you? Let’s keep the conversation going.